Testing stops after one triggers the error. Screenshot of test results for student answer causing runtime error. Test results for a student answer that caused a runtime error \Description The code defines the function, initialises a counter at 0, runs a for loop over the list, in each iteration increases the counter by that number of words, then after the loop divides the counter by the number of words and returns this as output. Screenshot of code which solves the example question.

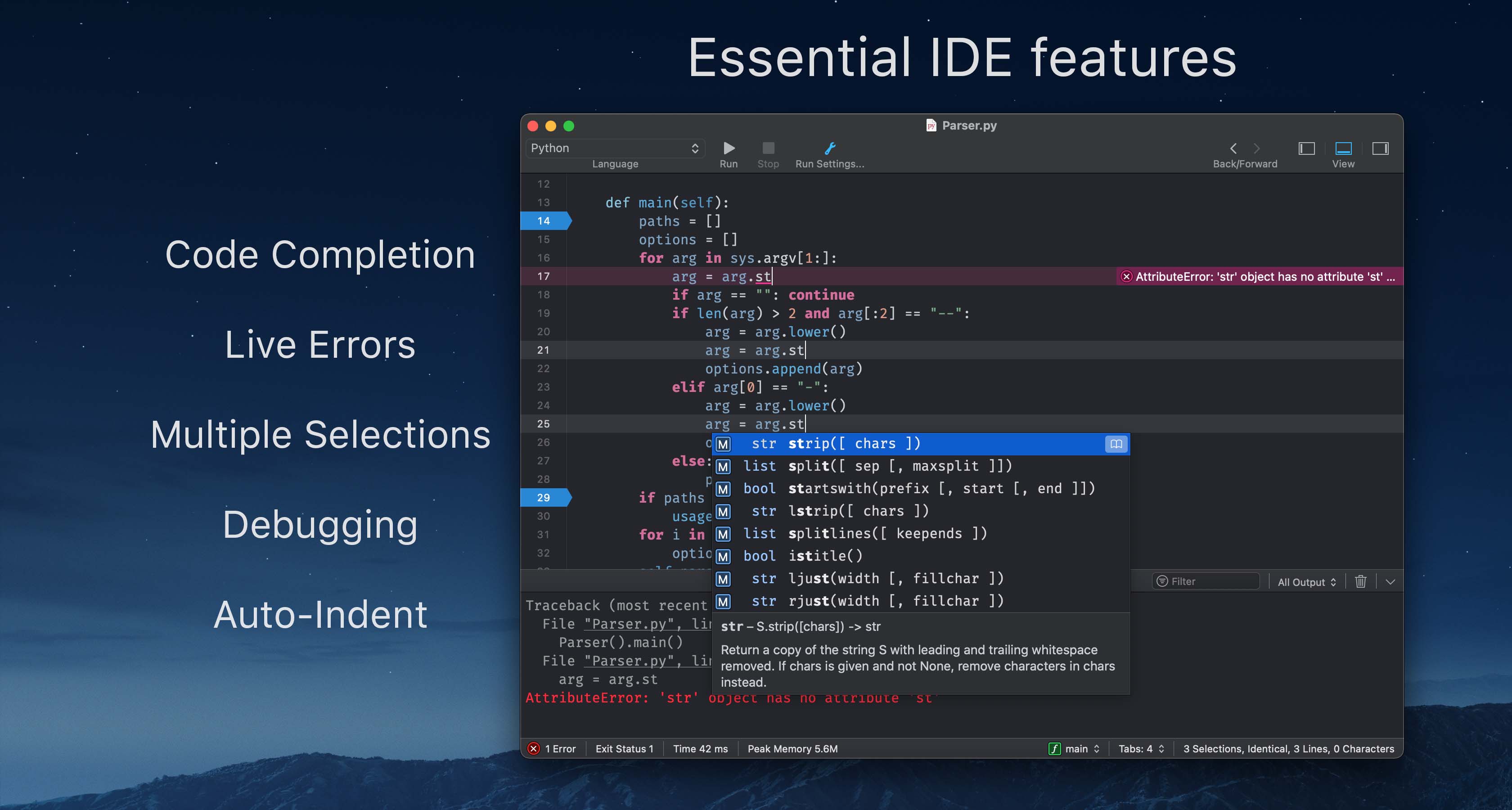

Example of a student answer to the question in Figure 1 that would pass all of tests (the opaque rows are hidden tests which would not be seen by the student) \Description Do not use a while loop or recursion or the map function. The function should return a float with the average number of letters per word in the list. The questions reads as follows: Define a Python function called avgWordLength which takes a list of strings as its input argument. Screenshot of an example CodeRunner question with the box underneath for students to enter their code. Example of a CodeRunner question \Description This reflects our view that errors (1)-(3) are not critical gaps in student knowledge, so long as they are able to read the error messages and unit test feedback to correct them quickly.įigure 1. They would have only used Check twice and thus receive no penalty, getting full marks for the question. If students then added in the return statement and pressed Check again they would pass all tests as in Figure 2. Our positive results with automated formative feedback (Croft and England, 2019) led us to consider the same approach for summative assessment. The additional verbosity required for C code meant that 4003CEM has no written exam. no syntax checker, no access to language documentation, no ability to edit the code without writing it all out again. 4000CEM has also a written exam which does assess these abilities, however, this is an inauthentic environment different to both the one in which students learnt the material and any professional environment in which they would later apply it. Such questions can assess knowledge of programming concepts and theory, but not the ability to formulate an algorithm and code to solve a problem. We use Moodle quizzes, but prior to 2018/19 these employed only the standard Moodle question types like multiple choice. 
Given the mid-semester time, tight feedback deadlines, and large quantity of students, an auto-marked mid-term was essential (especially with the human feedback committed to the projects). We hence think there is value in sharing our experiences. CodeRunner has the potential to become the standard automated code assessment tool of choice, but to the best of our knowledge the only publication on CodeRunner is the 2016 introduction by the developers in ACM Inroads (Lobb and Harlow, 2016). Further, it is fully approved by Moodle which means installation is easy, both technically and in terms of administration 2 2 2The approved plugin status meant our IT team team would add it to our Moodle installation without any major review. In contrast, CodeRunner was developed as a plugin for the very widely used learning management system Moodle 1 1 1According to the following 2017 report Moodle is used by an absolute majority of HE institutions in Europe, Latin America and Oceania and a quarter in North America. However, it seems historically there are a wide range of independent tools with few used very widely beyond the institutions which created them. The use of automated assessment for coding is of course not a new topic − see for example the survey (Ala-Mutka, 2005).
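To make the example question concrete, the following is a minimal sketch of an answer matching the description of the avgWordLength solution (a counter, a for loop, a final division), together with a variant that omits the return statement. The helper `run_tests`, the variant name `avgWordLength_missing_return` and the test cases in `CASES` are hypothetical illustrations and not CodeRunner's actual implementation or the tests used in the module; the helper only mimics the reported behaviour that testing stops once a test triggers a runtime error.

```python
def avgWordLength(words):
    """Return the average number of letters per word in a list of strings."""
    total_letters = 0                  # counter initialised at 0
    for word in words:                 # for loop only: no while, recursion or map
        total_letters += len(word)     # add the number of letters in this word
    return total_letters / len(words)  # true division, so the result is a float


def avgWordLength_missing_return(words):
    """Hypothetical student answer that forgets the return statement."""
    total_letters = 0
    for word in words:
        total_letters += len(word)
    total_letters / len(words)         # value computed but never returned


def run_tests(func, cases):
    """Hypothetical harness: run cases in order, stopping at the first runtime error."""
    results = []
    for words, expected in cases:
        try:
            got = func(words)
        except Exception as exc:       # a runtime error ends the test run early
            results.append((words, expected, repr(exc), False))
            break
        results.append((words, expected, got, got == expected))
    return results


# Hypothetical test cases, chosen so the expected floats are exact.
CASES = [
    (["a", "bb", "ccc"], 2.0),
    (["hello", "world"], 5.0),
    (["to", "be", "or", "not"], 2.25),
]

if __name__ == "__main__":
    for row in run_tests(avgWordLength, CASES):
        print(row)
```

The answer without a return statement raises no error but returns `None`, so every test fails until the return is added. An answer that raises an exception on the first case would produce a single failed row, because the sketch stops testing at that point.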
